How AI Search Engines Actually Work (And What It Means for Your Content)

Most AI SEO advice hands you a checklist — structure your content, earn backlinks, use schema markup. The checklist is correct. But without understanding why AI search works the way it does, every tip feels like a superstition rather than a strategy. This guide explains the actual mechanics of how AI search engines work: where they get their information, how a single prompt silently becomes dozens of searches, and why appearing in AI-generated answers is a probability you build — not a rank you hold.

Once you understand how the engine works, every optimization decision makes sense on its own terms. Whether you are building content for Google AI Overviews, ChatGPT, or Perplexity, these foundational mechanics apply across every major AI search platform in 2026 — and getting them right is what separates brands that grow in AI search from the ones that stay invisible.

In this guide, we will cover:

- Where AI search engines get their information (two distinct sources)

- What Query Fan-Out is and why it completely changes how content coverage works

- Why AI citations are probabilistic rather than positional

- The three signals that increase your probability of being cited

- How to map these mechanics to a coherent strategy

How AI Search Engines Actually Work in 2026

How AI Search Engines Actually Work in 2026

Where Does AI Get Its Information?

Before you can optimize for AI search, you need to understand that AI search engines do not pull from one pool of information — they pull from two fundamentally different sources. Treating them as the same thing is the first strategic mistake most brands make.

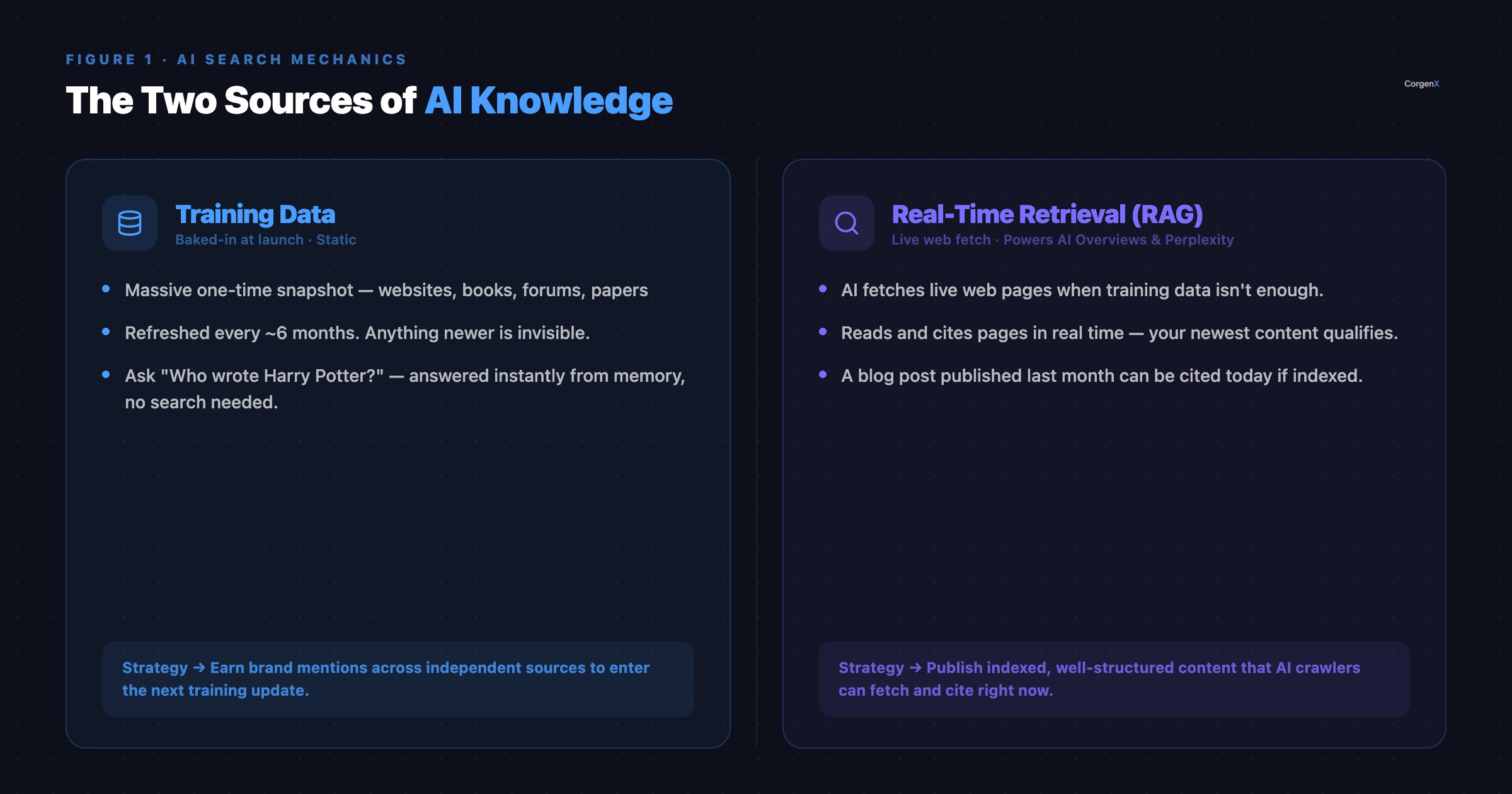

Figure 1: The Two Sources of AI Knowledge — Training Data (baked in at model launch) and Real-Time Retrieval (fetched on demand during each query). Your content can influence both, but through very different mechanisms.

Figure 1: The Two Sources of AI Knowledge — Training Data (baked in at model launch) and Real-Time Retrieval (fetched on demand during each query). Your content can influence both, but through very different mechanisms.

Source 1 — Training Data

Training data is the foundation of every AI model. Before ChatGPT, Gemini, or Claude launched, engineers fed the model a massive one-time snapshot of the internet — websites, books, academic papers, Reddit threads, forum discussions, video transcripts, official documentation. The model learned from all of it.

This knowledge is baked in at training time. It does not update in real time. Most major AI models refresh their training data roughly every six months, which means anything published or changed after the cutoff is completely invisible to the model's base knowledge.

What this looks like in practice:

Ask any AI chatbot "Who wrote Harry Potter?" and it answers instantly, without searching. That is training data — the model learned that fact during training and recalls it on demand. But ask it "What did Virat Kohli score in yesterday's match?" and the model hits a wall. That event happened after its training cutoff, and no amount of prompting will surface accurate real-time data from the base model alone.

Why this matters for your brand: If your company, product, or point of view is mentioned consistently across enough high-quality, independent sources — publications, reviews, forums, industry reports — you can eventually become embedded in training data. When the model is next updated, your brand exists in its base knowledge and can be surfaced without any live search at all.

Source 2 — Real-Time Retrieval (RAG)

When training data is not enough — because the information is too recent, too niche, or too specific — AI search engines use a technique called Retrieval-Augmented Generation (RAG). The model goes out to the live web, fetches relevant pages in real time, reads them on the spot, and builds its answer from what it finds.

RAG is the mechanism powering Google AI Overviews, Perplexity's live results, and ChatGPT's web browsing mode. It is also where your content can be picked up right now, regardless of how new your site is or how long you have been publishing.

What this looks like in practice:

Imagine you run a fintech startup that launched a new UPI payment feature last month. No AI model was trained on that feature — it simply does not exist in any model's base knowledge. But if your blog post explaining the feature is live, well-structured, and indexed, the AI can fetch that page during real-time retrieval and cite it directly in its answer.

Why this matters for your brand: RAG gives you two distinct optimization levers. Lever one is training data — be mentioned across enough independent sources that the AI eventually knows your brand without searching. Lever two is real-time retrieval — publish indexed, structured, accessible content that AI crawlers can fetch on demand. Your traditional SEO skills (crawlability, content structure, authority signals) power lever two directly.

Query Fan-Out: One Prompt, Dozens of Searches

This is arguably the most important concept in AI search that most content guides skip entirely. Understanding it will immediately change how you think about topic coverage and content depth.

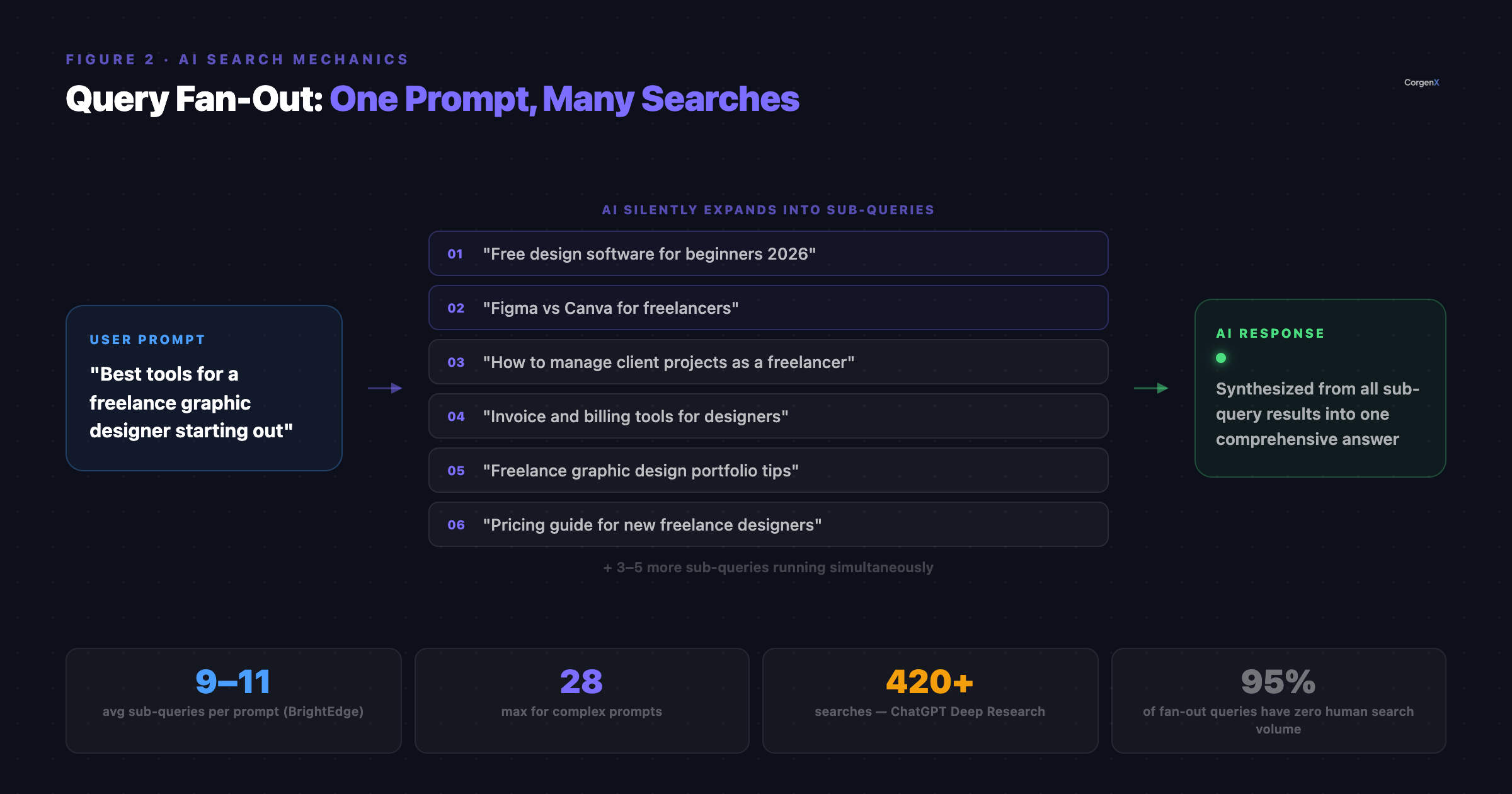

When a user types a prompt into an AI search engine, the AI does not search for exactly what was typed. It expands the prompt into multiple smaller sub-queries that run simultaneously in the background. It then pulls answers from all of those searches and synthesizes them into a single, comprehensive response.

This expansion process is called Query Fan-Out — and it fundamentally reshapes what "covering a topic" means in 2026.

Figure 2: Query Fan-Out in Action — A single user prompt silently expands into multiple sub-queries. Content that covers enough of those sub-topics gets selected; content that covers only some of them loses to competitors who cover all of them.

Figure 2: Query Fan-Out in Action — A single user prompt silently expands into multiple sub-queries. Content that covers enough of those sub-topics gets selected; content that covers only some of them loses to competitors who cover all of them.

How Search Evolved to Get Here

The mechanics of search have changed significantly across three distinct eras:

| Era | How It Worked |

|---|---|

| Classic Search | 1 query typed → 1 ranked list of results returned |

| Intent Matching Era | Many query variations → same results (search engines grouped by intent) |

| AI Search Era | 1 prompt → dozens of AI-generated sub-queries, all running in parallel |

What Fan-Out Actually Looks Like

Someone types: "Best tools for a freelance graphic designer starting out"

The AI does not search that phrase. Behind the scenes, it silently generates and fires queries like:

- "Free design software for beginners 2026"

- "Figma vs Canva for freelancers"

- "How to manage client projects as a freelancer"

- "Invoice and billing tools for designers"

- "Freelance graphic design portfolio tips"

- "Pricing guide for new freelance designers"

- "Best laptop specs for graphic design"

- …and several more, all running simultaneously

It then pulls content from every one of those searches and assembles a single, comprehensive answer. The user sees one clean response. The AI ran a full research operation.

The Data Behind Fan-Out

The scale of this process is significant enough to change your content strategy entirely:

- A typical prompt generates 9–11 fan-out sub-queries on average, according to BrightEdge AI search behavior analysis

- Complex research prompts can generate as many as 28 simultaneous sub-queries (BrightEdge)

- ChatGPT's Deep Research mode ran over 420 searches for a single high-intent purchasing query, as independently documented by AI research journalists

These numbers represent how seriously AI engines treat comprehensiveness. An AI that runs 420 searches before answering is actively hunting for every relevant sub-topic it can find. If your content covers some of those sub-topics and not others, you lose to a source that covers all of them.

The Critical Nuance — Do Not Misuse Fan-Out Data

Here is where a lot of early "AI SEO" advice goes wrong: fan-out queries are synthetic. The AI generates them on the fly, based on each specific prompt. They are inconsistent — the same user prompt may generate slightly different sub-queries on Monday than it does on Thursday. And over 95% of fan-out sub-queries have zero measurable human search volume.

Think of fan-out queries like a chef's mental checklist when tasting a dish before service. Before sending it out, a good chef mentally runs through: Is it salty enough? Is the acidity balanced? Does it need more texture? Is it at the right temperature? No diner walks in and orders "mid-acidity balance" — but the chef considers it anyway. These internal quality checks are not menu items.

What to do instead: Do not treat fan-out queries as a new keyword list to target individually. Treat them as a signal about which sub-topics the AI considers necessary for a complete, authoritative answer on a given subject. Use them to audit your content coverage. If the AI fans out into eleven sub-topics and your content addresses seven, you are losing to a competitor who addresses all eleven — even if your domain authority is higher.

AI Citations Are Probabilistic, Not Fixed

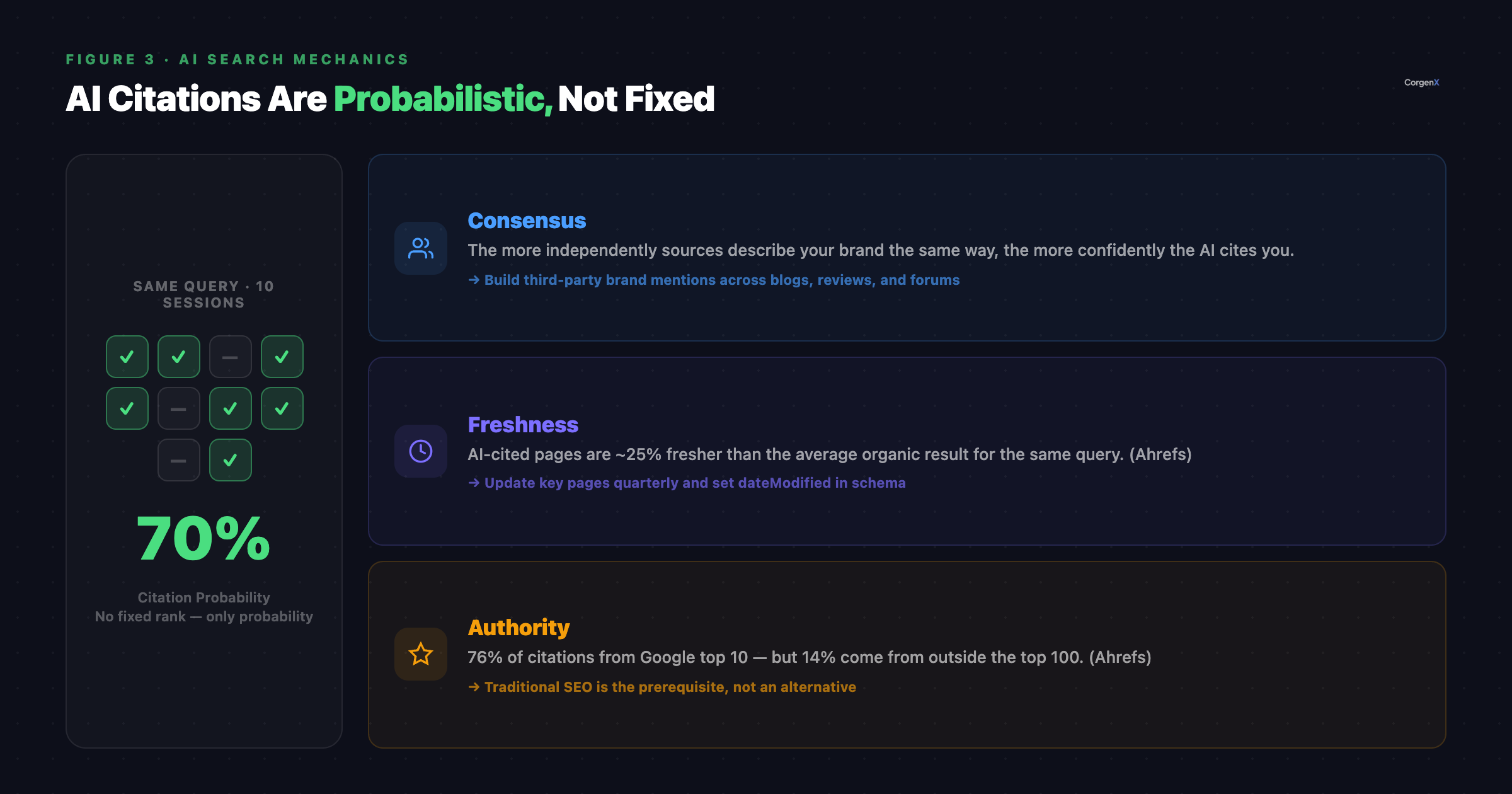

In traditional search, you either rank at position one or you do not. The result is deterministic — search the same query twice and the ranking does not change between attempts.

AI citations do not work this way. They are probabilistic.

Figure 3: AI Citations as Probability — Rather than holding a fixed rank, your content earns a likelihood of appearing as a citation. The same query asked on different days may cite different sources depending on real-time retrieval results and model temperature.

Figure 3: AI Citations as Probability — Rather than holding a fixed rank, your content earns a likelihood of appearing as a citation. The same query asked on different days may cite different sources depending on real-time retrieval results and model temperature.

Why Citations Vary

This is partly a technical feature of how AI language models generate output. They use a setting called "temperature" that intentionally introduces a degree of randomness into every response. This means the same query, asked twice, may produce slightly different phrasing — and slightly different source citations.

Think of it like ten journalists independently writing round-up articles on "Best project management tools for remote teams." Your product might get mentioned in seven of those articles but not all ten — because each journalist pulled slightly different sources, weighted different criteria, and worked from slightly different research. AI citations work the same way. Ask the same question on Monday and Thursday and you might appear in six out of ten responses, not every single one.

This has a profound strategic implication: there is no position one to chase. The goal shifts from "ranking" to "increasing the probability of being cited." It is a visibility score, not a leaderboard slot.

The Three Signals That Increase Citation Probability

1. Consensus

The more consistently your brand is described the same way across multiple independent sources, the more confidently AI models repeat that description.

If fifteen different blogs, review sites, Reddit threads, and industry forums all describe your SaaS tool as "the most intuitive CRM for solopreneurs," the AI treats that as established fact and cites it with confidence. If only your own website makes that claim — the AI hesitates, or skips it entirely.

The implication: Brand mention campaigns, PR placements, industry forum participation, and third-party review building are no longer soft marketing activities. They are direct inputs into your AI citation probability. Consensus across independent sources is one of the highest-leverage investments you can make in the AI search era.

2. Freshness

AI-cited content tends to be significantly more recent than what appears in traditional organic search results. According to Ahrefs research on AI Overview citations, pages cited in AI-generated responses are approximately 25% fresher than the average page ranking for the same query in standard Google results.

AI search engines are specifically designed to surface current, accurate information — and they actively favor recently updated content, particularly for fast-moving topics like technology, finance, health, and marketing.

The implication: Regularly updating your key pages is not just good SEO hygiene. It directly improves your AI citation probability. Add a dateModified field to your schema markup and make genuine, substantive updates to your most important articles every quarter. Adding new statistics, refreshing examples, and updating recommendations all count as signals of freshness.

3. Authority

Authority remains enormously important in AI citation selection — but with a nuance that creates a genuine opportunity for brands outside the top of Google's rankings.

According to Ahrefs data, 76% of AI overview citations come from pages already ranking in Google's top 10. Traditional organic authority carries massive weight, and your on-page and off-page SEO foundation directly influences how often you are selected as an AI source.

But here is the opportunity: the same Ahrefs study found that 14% of cited pages do not rank in Google's top 100 at all. For platforms like ChatGPT and Perplexity, the overlap with Google's organic results is even lower. This means brands that are not dominant in traditional search — smaller companies, specialist publishers, newer entrants — still have a documented, real path into AI visibility.

The entry point is not raw domain authority. It is structured content, specific expertise signals, and freshness on sub-topics where larger competitors have shallow or outdated coverage.

How the Full AI Search Process Works — End to End

Now that each piece is clear, here is the complete sequence from the moment a user types a prompt to the moment a response is generated:

- User types a prompt — a question, a research task, a comparison

- AI checks training data — surfaces what it already knows from its last training update

- AI fires real-time web searches — for anything too recent, too specific, or requiring verification

- Fan-out kicks in — that one prompt becomes 9 to 28 sub-queries running in parallel

- Results are pulled, scored, and merged — content evaluated for relevance, recency, and authority

- A probabilistic response is generated — weighted by consensus, freshness, and authority signals

Every step in this process maps directly to a strategy you can execute:

| AI Search Mechanic | What It Means for Your Content Strategy |

|---|---|

| Training data | Earn consistent brand mentions across independent third-party sources |

| Real-time retrieval (RAG) | Publish indexed, structured, AI-accessible content that crawlers can fetch |

| Query fan-out | Cover your topic deeply enough to satisfy all sub-queries, not just the main keyword |

| Probabilistic citations | Build consensus, freshness, and authority — not just a ranking position |

Putting It All Together: Why Every AI SEO Tip Actually Makes Sense

Understanding these mechanics makes the entire landscape of AI SEO advice coherent rather than arbitrary.

"Earn more brand mentions" — now you know why. Consensus across independent sources drives citation probability. Every press mention, third-party review, forum discussion, and industry report is a measurable input into the probabilistic citation process.

"Cover your topics deeply" — now you know why. Fan-out means the AI looks for comprehensive coverage across every relevant sub-topic simultaneously. Content that covers the main keyword but leaves six sub-topics unaddressed loses to a competitor who covers all of them — even if your domain authority is higher.

"Keep investing in traditional SEO" — now you know why. Seventy-six percent of AI citations still come from pages ranking in Google's top 10. Your core web vitals performance, backlink authority, and technical SEO health are not obsolete — they are the prerequisite for AI search visibility, not an alternative to it.

These three pillars — brand consensus, comprehensive topic coverage, and organic authority — are not random tips. They are direct strategic responses to the mechanics of how AI search engines actually work.

What This Means If You Are Running a Business Website

For most business owners and marketing teams, this translates into three immediate priorities.

First, audit your topic coverage. Pick your three most important target topics. Search each one in ChatGPT or Perplexity with a complex prompt. Look at the response and ask: which sub-topics did the AI address that your content does not? Those gaps are where fan-out is pulling from competitors instead of you.

Second, build brand signals off your own site. If your brand is only described on your own website, the consensus signal is weak. A single PR placement, a G2 review campaign, or active participation in a niche forum can start building the multi-source consensus that AI models look for.

Third, treat content freshness as infrastructure. Set a quarterly review cycle for your highest-traffic and highest-intent pages. Update statistics, refresh examples, revise any recommendations that have changed. Freshness is not just a hygiene task — it is a citation probability lever.

At CorgenX, our SEO services are built around exactly this framework — from AI-readiness audits and topic coverage analysis to off-site brand mention strategies designed for the way AI search actually works. If your content is technically strong but not appearing in AI-generated answers, the mechanics above are the most likely explanation.

What Comes Next

Understanding the underlying mechanics is the foundation — but there is a follow-up question most readers have at this point: does all of this work the same way on ChatGPT, Perplexity, and Google AI Overviews?

The honest answer: not even close. Each platform indexes content differently, uses different crawlers, weights freshness and authority differently, and attracts different user intent profiles. A strategy built purely around Google AI Overviews will miss the growing research and purchasing audience using Perplexity and ChatGPT daily.

Platform-specific optimization is the natural next step — and it is where the most significant competitive gaps are opening in 2026.

FAQs

What is the difference between AI training data and real-time retrieval?

Training data is the large snapshot of the internet baked into an AI model before launch — it does not update in real time and is typically refreshed every six months. Real-time retrieval (RAG) is what happens when the AI goes out to the live web to fetch current information for a specific query. Both sources can include your content: training data through consistent brand mentions across independent sources over time, and real-time retrieval through well-structured, indexed, accessible content that AI crawlers can fetch on demand.

What is Query Fan-Out in AI search?

Query Fan-Out is the process by which an AI search engine expands a single user prompt into multiple simultaneous sub-queries running in the background. According to BrightEdge AI search research, a typical prompt generates 9–11 sub-queries; complex prompts can generate up to 28. ChatGPT's Deep Research mode has been independently documented running over 420 searches for a single high-intent purchasing query. The AI synthesizes results from all of those sub-queries into one response, which means content that covers a topic comprehensively — across all relevant sub-topics — is more likely to be selected as a citation source than content that only covers the main keyword.

Why are AI citations probabilistic rather than fixed?

AI language models use a temperature setting that introduces controlled randomness into responses, meaning the same query asked twice may produce different output — including different citations. Additionally, real-time retrieval pulls from a variable pool of live sources, so citation outcomes shift based on what is indexed, how fresh it is, and how the fan-out sub-queries resolve on any given day. The strategic goal is to increase your probability of being cited — through consensus, freshness, and authority — rather than targeting a specific position.

How does consensus affect AI citation probability?

If your brand, product, or expertise claim is described consistently across multiple independent sources — reviews, forums, publications, industry reports — AI models treat that description as established and repeat it with confidence. A claim that only appears on your own website carries far less citation weight. Building brand consensus through PR, community participation, and third-party coverage is a direct input into your AI citation probability, not just a branding exercise.

Does traditional SEO still matter for AI search visibility?

Yes — it is the prerequisite, not an alternative. Ahrefs research shows that 76% of AI overview citations come from pages already ranking in Google's top 10. Your organic SEO foundation — technical health, backlink authority, and E-E-A-T signals — directly influences your AI search visibility. The 14% of cited pages that do not rank in Google's top 100 (also per Ahrefs) represent a real opportunity, particularly on niche sub-topics, but they are the exception rather than the rule.

What does content freshness have to do with AI citations?

AI search engines actively favor recently updated content, particularly for fast-moving topics. According to Ahrefs research, pages cited in AI-generated responses tend to be approximately 25% fresher than the average organic search result for the same query. Adding a dateModified field to your schema markup, updating statistics and examples quarterly, and refreshing recommendations as your industry evolves are all direct inputs into your AI citation probability — not just good SEO hygiene.

How is AI search different from traditional Google search?

Traditional Google search returns a ranked list of links that users click through. AI search generates a synthesized, natural-language answer that cites sources inline — often without requiring the user to leave the results page at all. The metric that matters shifts from ranking position and click-through rate to citation rate and brand mention frequency in AI responses. Both systems reward authority and relevance, but AI search additionally rewards comprehensiveness, consensus, and freshness in ways that traditional ranking algorithms do not weight as heavily.

Can a smaller or newer website get cited in AI search results?

Yes. Ahrefs data shows that while 76% of AI citations come from pages in Google's top 10, 14% come from pages outside the top 100 — and for platforms like ChatGPT and Perplexity, the overlap with Google's organic rankings is lower still. Smaller websites can earn AI citations by publishing highly structured, answer-first content on specific sub-topics where larger competitors have shallow, outdated, or missing coverage. Freshness and specificity can outweigh raw domain authority on niche queries, which is one of the most significant strategic opportunities for growing brands in 2026.

What should I do first to improve my AI search visibility?

Start with a topic coverage audit: pick your three most important topics, run complex prompts about each in ChatGPT or Perplexity, and identify which sub-topics the AI addresses that your content does not. Those gaps are where fan-out is pulling from competitors instead of you. Fixing coverage gaps produces faster, more measurable AI visibility improvements than most technical optimizations, because you are directly addressing the comprehensiveness signal that fan-out rewards.

Sources

- Ahrefs — AI Overviews Citation Study: citation freshness (25% fresher), ranking overlap (76% from Google top 10, 14% outside top 100)

- BrightEdge — AI Search Behavior Analysis: query fan-out averages (9–11 sub-queries per prompt, up to 28 for complex prompts)

- Independent AI Research — ChatGPT Deep Research mode: 420+ searches documented for a single high-intent purchasing query

Related Articles

Ready to Scale?

Our high-performance web solutions and SEO strategies are designed to deliver results.

Check out our services